Robotic Manipulation

in Densely Packed Containers

UW + Amazon Science Hub

Real-world Challenge & Innovative Approach:

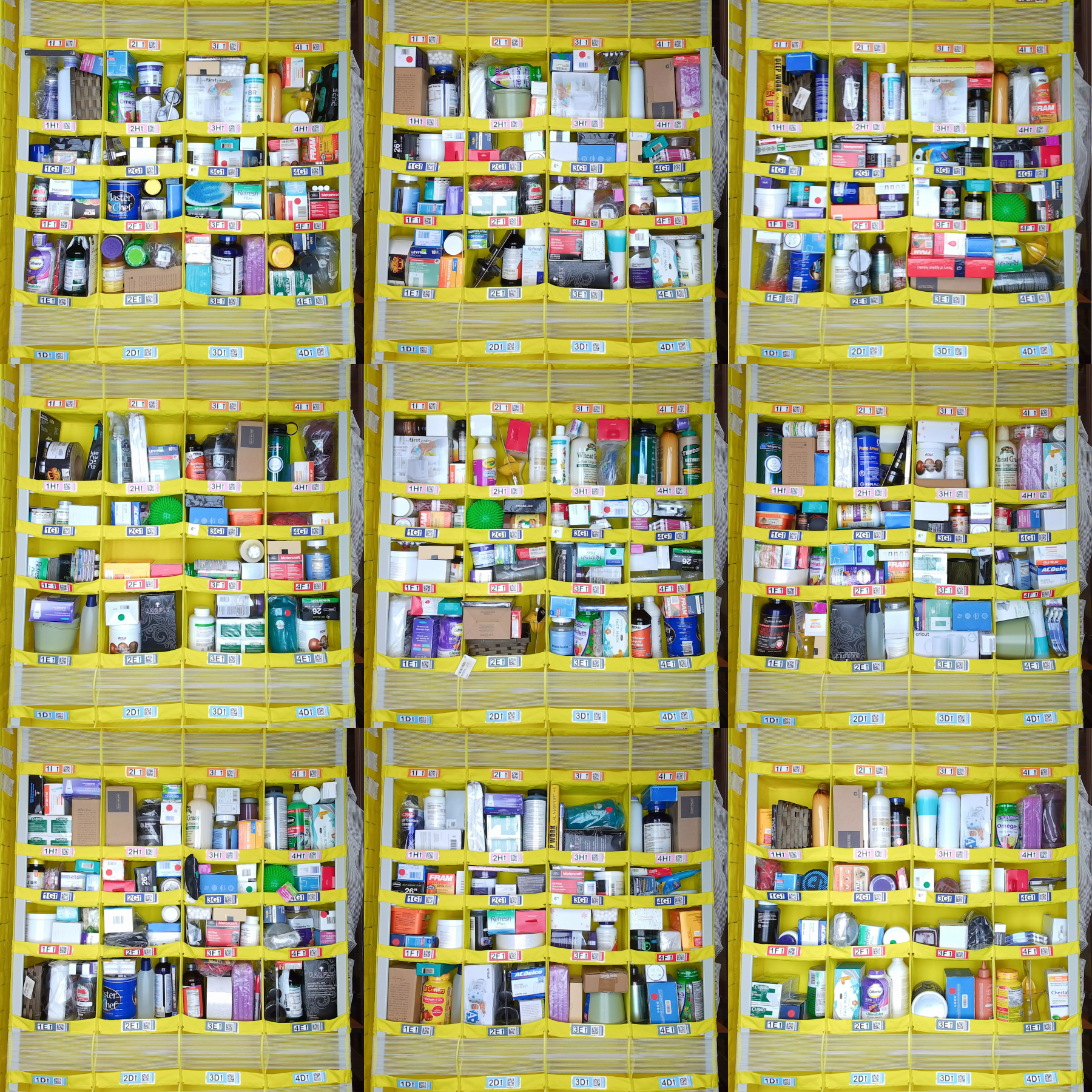

Revolutionizing Amazon’s Fulfillment Centers, our team at the UW + Amazon Science Hub Project “Robotic Manipulation in Densely Packed Containers” is pioneering the automation of the picking process.

We’re navigating through the complexity of densely stocked bins with an array of items, leveraging Robotic Manipulation, Computer Vision, Sensor Systems, Human-Robot Interaction, and Reinforcement Learning to enhance efficiency and innovate supply chain management.

Contribution:

During my research assistantship, I achieved the following results:

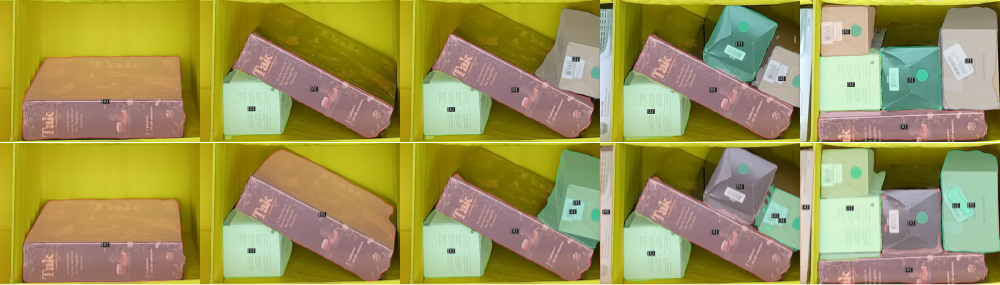

Synthetic Image Generation:

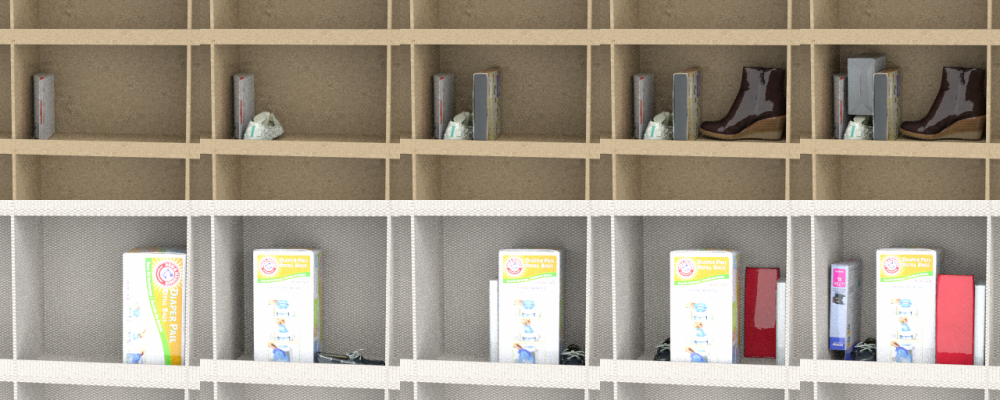

Generated using NVISII and Scanned Objects by Google Research.

Objects:

- incrementally added into scenes, resembling a process of stowing

- randomly chosen from the given training set, which does not overlap with the evaluation set

- placed depending on their shape (box, bottle or irregular), the bounding box similarity to the bin dimensions, and a type of packing

- oriented based on the remaining free space and packing optimality, with some randomness to prevent the network from overfitting

There might be some variations in the images, such as:

- max number of objects within each scene, and max number of scenes

- objects relative placement (w/ & w/o stacking, partial/full occlusion, shuffled order)

- object transformation (small perturbations in orientations, rotation by 90deg and/or 180deg)

- various bin configurations (fabric texture, size aspect ratios, neighboring bins as a background)

- different view angles and light brightness, representing different scenarios of bin vs camera mounting
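The variations listed above could be drawn per scene roughly as follows. This is only a minimal sketch: the `SceneConfig` fields, the value ranges, and the function name are hypothetical placeholders, not the settings actually used with NVISII in this project.

```python
import random
from dataclasses import dataclass


@dataclass
class SceneConfig:
    # All fields and ranges are illustrative assumptions.
    max_objects: int         # cap on objects in one scene
    allow_stacking: bool     # with or without stacking
    yaw_deg: int             # coarse rotation by multiples of 90 degrees
    jitter_deg: float        # small perturbation of the orientation
    light_intensity: float   # relative light brightness
    camera_pitch_deg: float  # view angle of the camera toward the bin


def sample_scene_config(rng: random.Random) -> SceneConfig:
    """Draw one randomized scene configuration."""
    return SceneConfig(
        max_objects=rng.randint(1, 12),
        allow_stacking=rng.random() < 0.5,
        yaw_deg=rng.choice((0, 90, 180, 270)),
        jitter_deg=rng.uniform(-5.0, 5.0),
        light_intensity=rng.uniform(0.5, 1.5),
        camera_pitch_deg=rng.uniform(-20.0, 20.0),
    )


config = sample_scene_config(random.Random(0))
print(config)
```

Passing an explicit `random.Random` instance keeps each generated scene reproducible from its seed.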

There are several configurations of stacking items in bins:

- simply introducing new objects without any rearrangement in the previous scene

- adding a new object and shuffling the placement order of all scene objects

- randomly reorienting the previous objects before adding a new one

- a combination of re-placement (shuffling) and reorientation
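The four configurations above can be sketched as a single update step on a scene. This is an illustration under my own assumptions: a scene is modelled as a plain list of `(object_id, yaw_deg)` pairs in placement order, and the mode names are hypothetical labels, not identifiers from the actual generator.

```python
import random

MODES = ("append", "shuffle", "reorient", "shuffle_and_reorient")


def next_scene(scene, new_object, mode, rng):
    """Return the next scene after adding `new_object` under `mode`.

    scene: list of (object_id, yaw_deg) tuples in placement order.
    """
    if mode not in MODES:
        raise ValueError(f"unknown mode: {mode}")
    updated = list(scene)  # leave the previous scene untouched
    if mode in ("reorient", "shuffle_and_reorient"):
        # Randomly reorient the previous objects before adding the new one.
        updated = [(obj, rng.choice((0, 90, 180, 270))) for obj, _ in updated]
    updated.append((new_object, 0))
    if mode in ("shuffle", "shuffle_and_reorient"):
        # Shuffle the placement order of all scene objects.
        rng.shuffle(updated)
    return updated


rng = random.Random(0)
scene = [("box", 0), ("bottle", 90)]
print(next_scene(scene, "towel", "append", rng))
print(next_scene(scene, "towel", "shuffle_and_reorient", rng))
```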

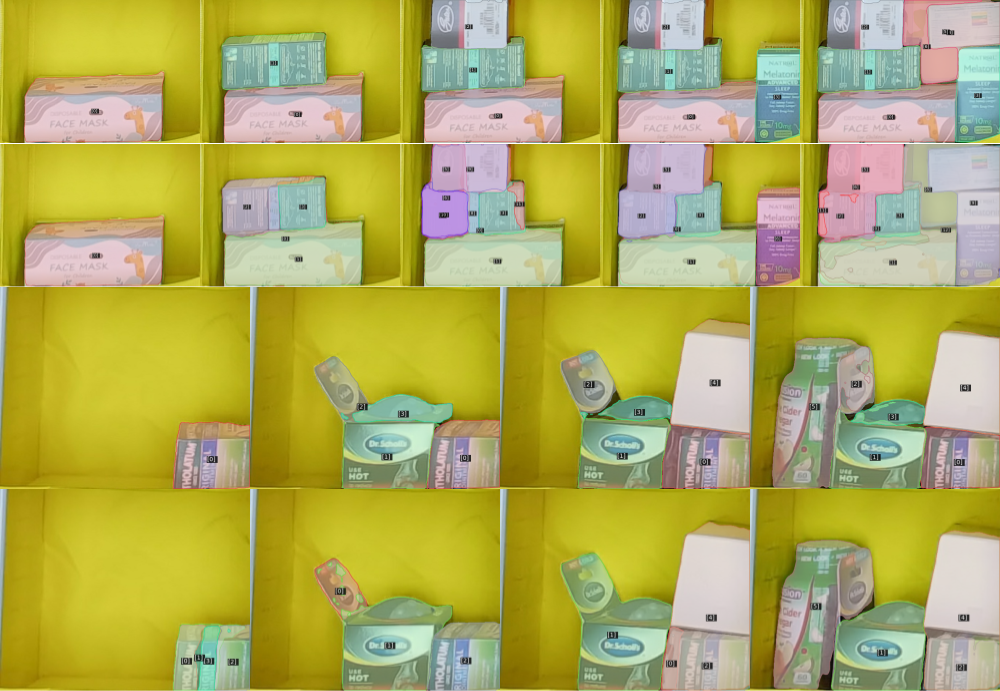

These images are used to train a novel framework, “Discrete-Frame Segmentation and Tracking of Unseen Objects for Warehouse Picking Robots”.

Evaluation in Real-World Scenarios:

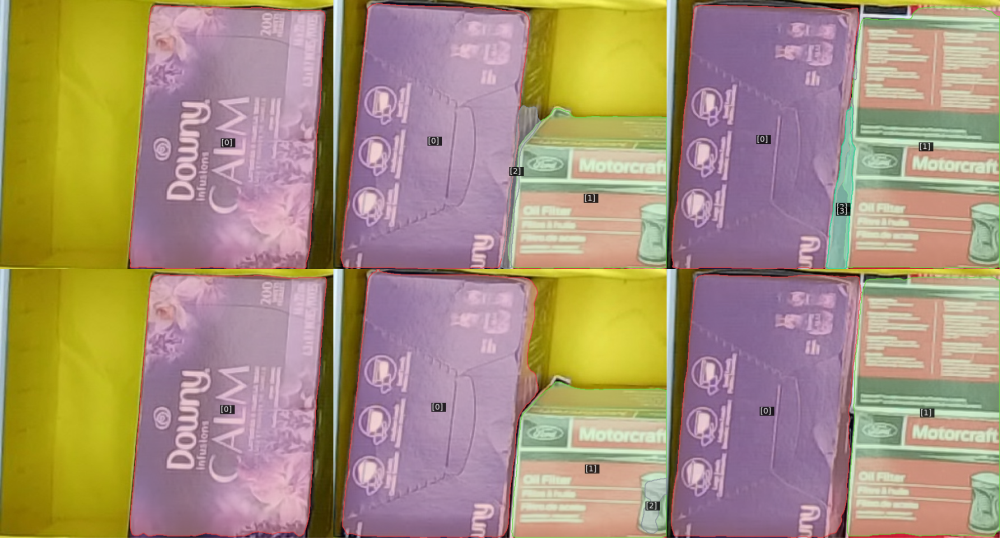

The average precision of the model trained on the expanded 140K-image dataset improved from 0.424 to 0.646 overall, and from 0.336 to 0.573 for stacked bins.
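To put these numbers in perspective, the absolute and relative gains can be computed directly from the reported values. The helper name `improvement` is just an illustrative choice.

```python
def improvement(old, new):
    """Return (absolute gain, relative gain) between two AP values."""
    absolute = new - old
    return absolute, absolute / old


reported = [("overall", 0.424, 0.646), ("stacked bins", 0.336, 0.573)]
for name, old, new in reported:
    abs_gain, rel_gain = improvement(old, new)
    print(f"{name}: +{abs_gain:.3f} AP ({rel_gain:.1%} relative)")
# overall: +0.222 AP (52.4% relative)
# stacked bins: +0.237 AP (70.5% relative)
```

The relative gain is larger for stacked bins, which is where the baseline was weakest.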

- The new model improves performance by eliminating failures in which objects placed on top of each other were segmented into a single mask.

- The new model is less prone to oversegmentation, although it may still oversegment in highly packed bins or for objects with contrasting parts.

- Neither model can differentiate between several identical or similar objects, such as cardboard boxes, and therefore both fail to track them.

Also, because the new model is trained on highly packed bins in which thin objects such as towels or napkins are placed vertically (the optimal orientation for storing and picking, computed from the object vs bin bounding-box similarity mentioned above), it might oversegment in such scenarios.

To increase the accuracy further, we need to improve segmentation and tracking, especially in the case of identical objects.

So our team decided to work on improving the architecture instead of refining the training set. I am now investigating how to integrate the Segment Anything Model (SAM) and/or Visual Language Models like GPT-4.

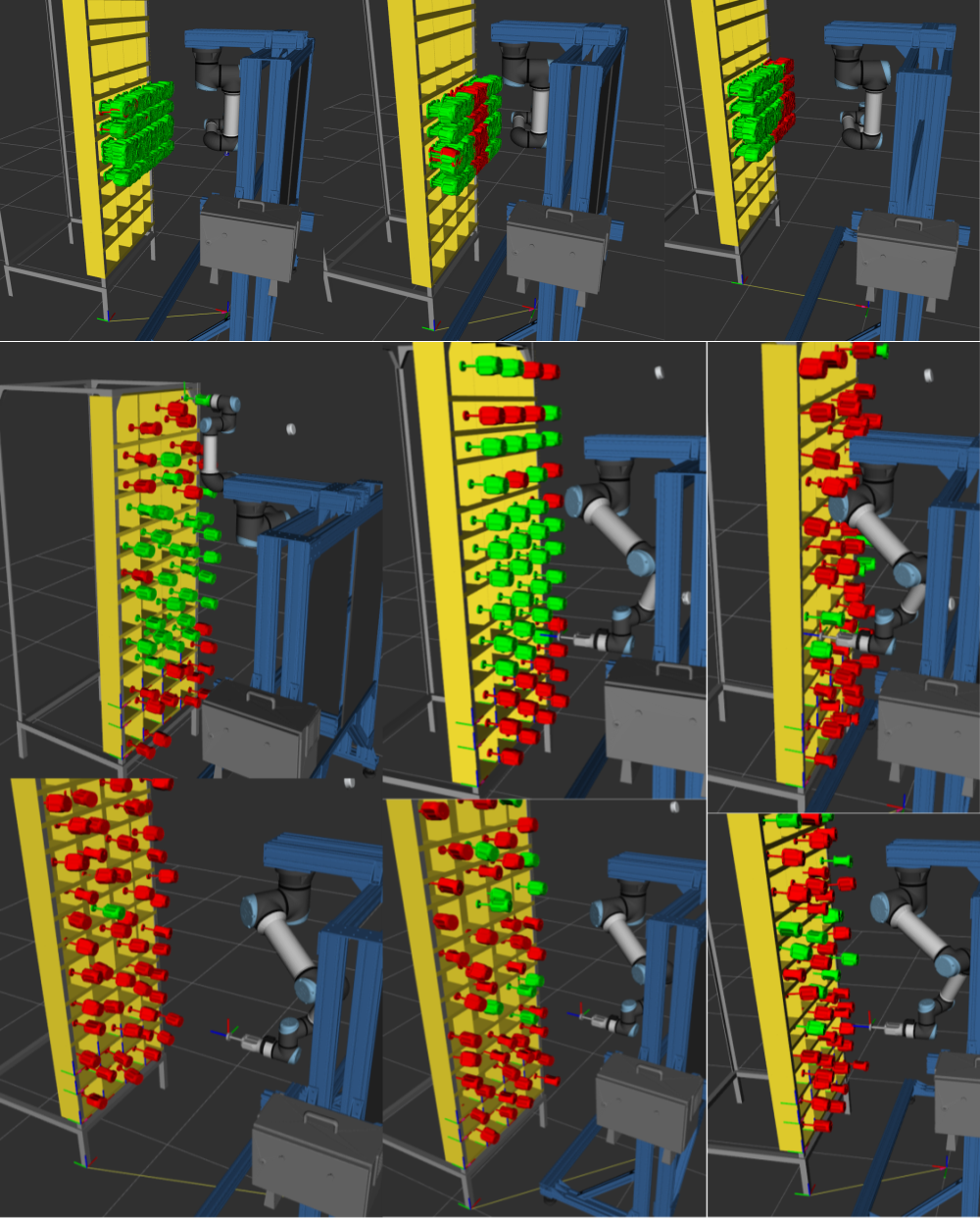

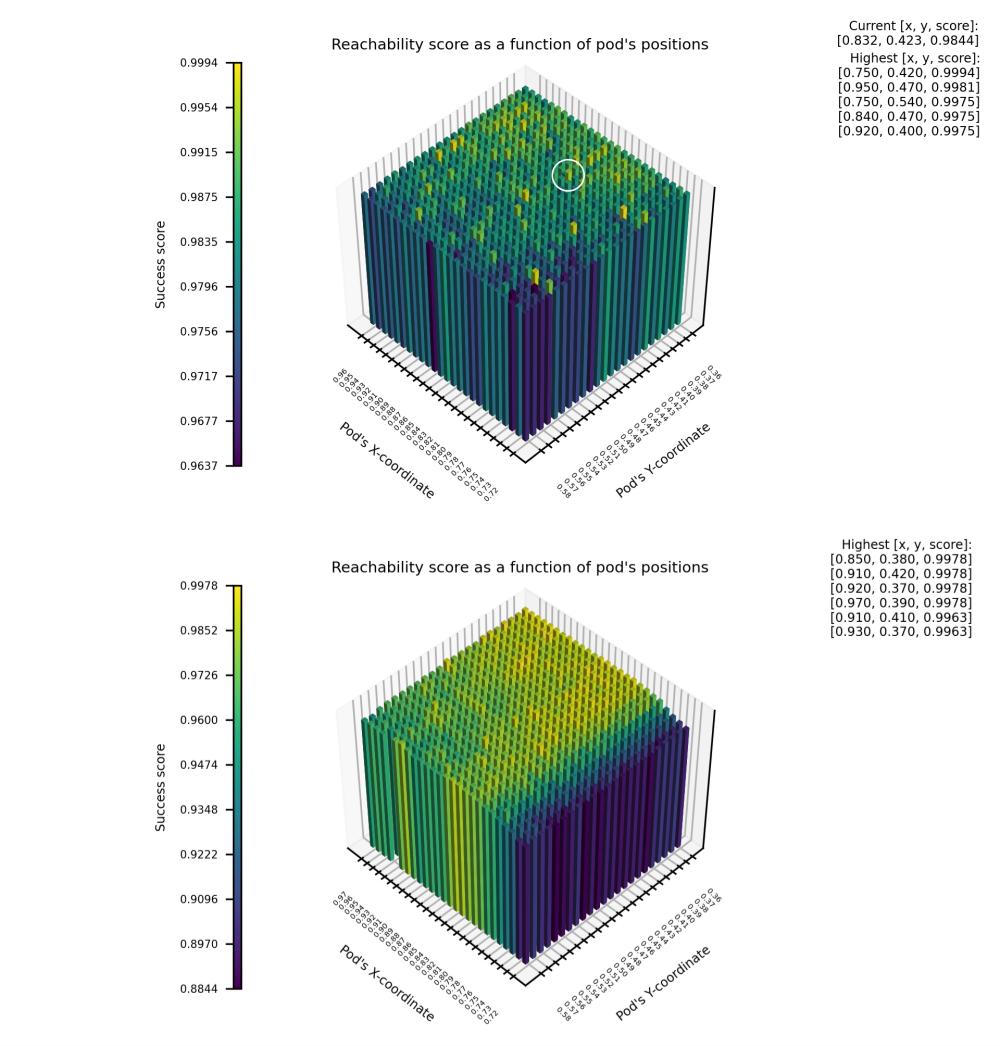

Reachability Analysis:

To find an optimal pod position relative to the robot workstation, I tested the robot’s reachability, i.e. moving the end-effector from the home pose (item dropping point) to a pre-grasp pose (one random pose in front of the given bin).

Red markers indicate poses that are unreachable due to kinematic constraints (rotation axis limits or link lengths). Green markers indicate a successful motion plan.

The 1st row depicts the 16 bins currently tested. The 2nd and 3rd rows show the entire pod.

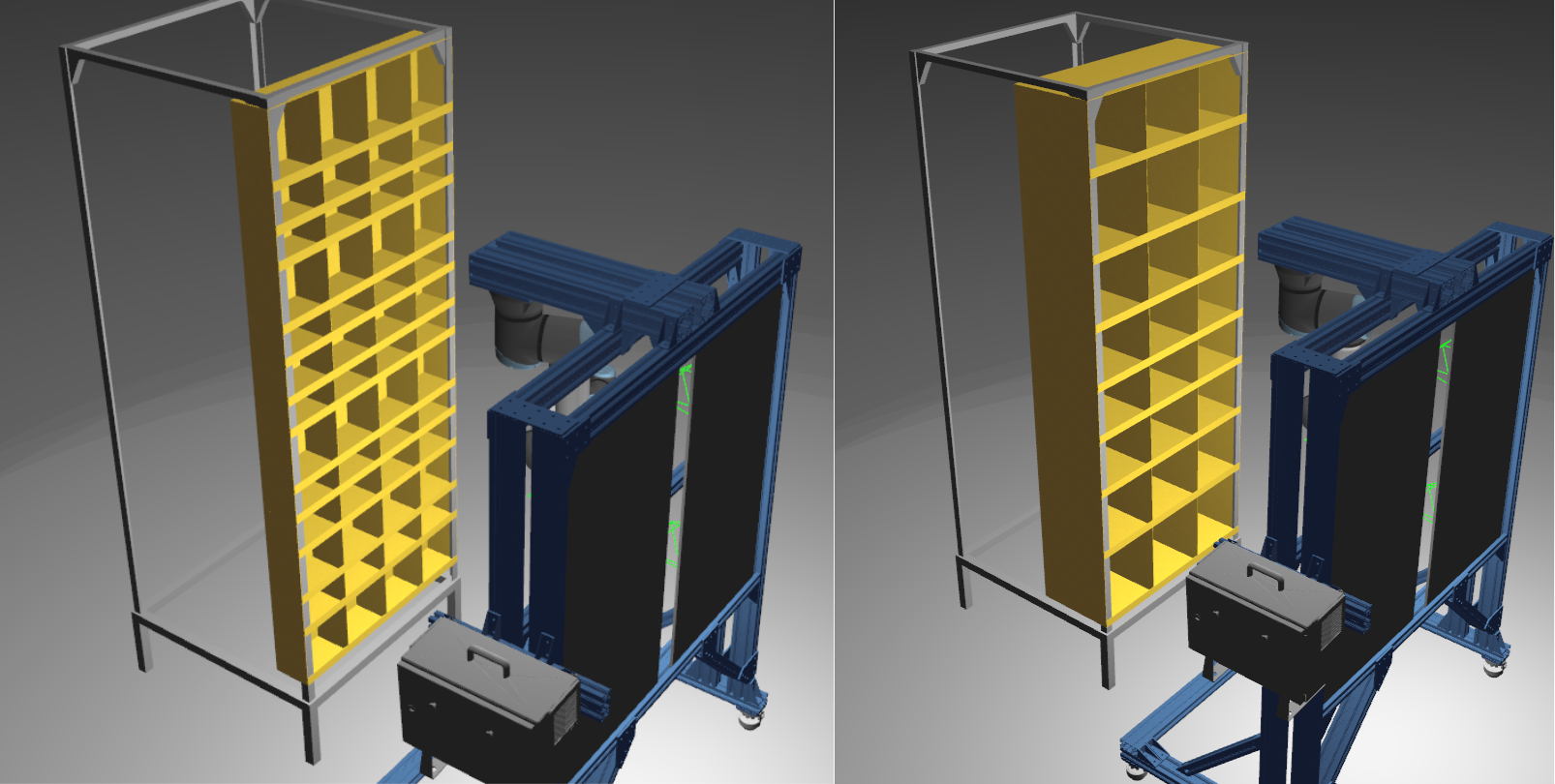

To run the tests as accurately as possible, I started by improving the precision and robustness of the simulation environment: I revised the URDF/Xacro/XML files and created a single source for all pod models.

Gazebo additionally includes collision scenes, which are set up by MoveIt in RViz.

Pod 1: 13 rows x 4 columns (right). Pod 2: 8 rows x 3 columns (left).

Then, I restructured the launch process by moving pod model spawning from a launch file to a Python file, which made it possible to run tests for different pod positions (x, y) in a for loop instead of manually re-launching the launch file each time.
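The loop over pod positions can be sketched as below. This is not the project's actual script: `pod_positions`, `run_sweep`, and the stand-in `fake_test` are hypothetical names, the grid origin and size are example values, and the real test would spawn the pod in simulation and plan motions instead of calling a stub.

```python
from itertools import product


def pod_positions(x_min, y_min, nx, ny, step=0.01):
    """Yield (x, y) pod positions in metres on a regular grid."""
    for i, j in product(range(nx), range(ny)):
        yield round(x_min + i * step, 3), round(y_min + j * step, 3)


def run_sweep(positions, test_reachability):
    """Call the reachability test once per pod position, collecting results."""
    results = {}
    for x, y in positions:
        results[(x, y)] = test_reachability(x, y)
    return results


def fake_test(x, y):
    """Placeholder for the real test; returns a number of failed bins."""
    return 0


results = run_sweep(pod_positions(0.40, 0.30, nx=20, ny=20), fake_test)
print(len(results), "pod positions tested")
```

Keeping the position generator separate from the test call makes it easy to change the grid without touching the simulation code.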

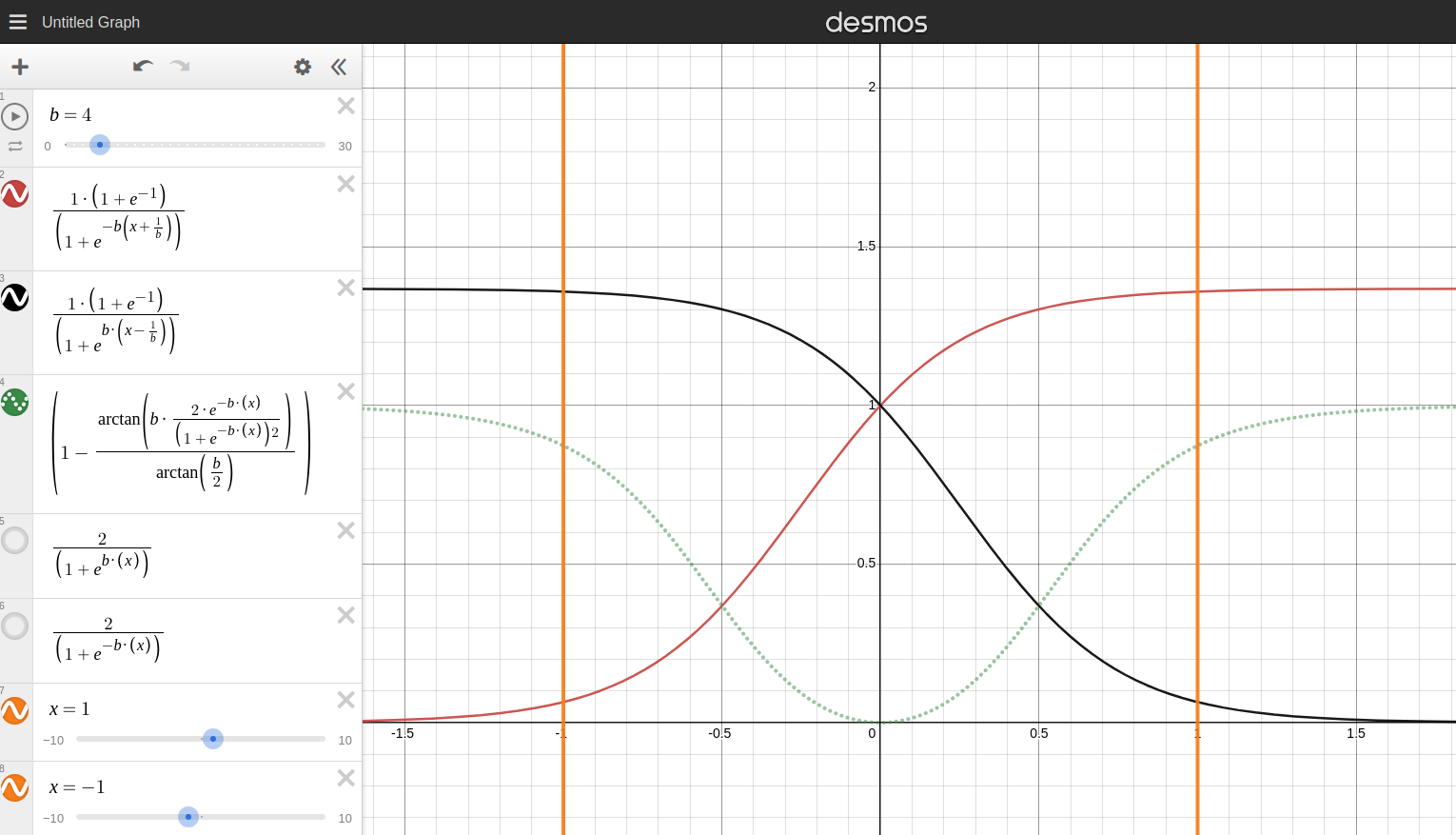

All pre-grasp poses used to be perpendicular to a bin face, while in reality objects inside the bin could be picked from different angles too.

So I tried two methods for sampling the end-effector orientation:

- symmetrical sigmoids (unsuccessful because they made the pre-grasp poses converge to the center)

- modified uniform (which is close to reality as it filters out orientations that might cause collisions with bin walls when the end-effector moves from pre-grasp pose to grasp objects inside the bin).
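One way to read the modified uniform method is as rejection sampling: draw the yaw uniformly and discard any draw whose straight-line approach would touch a side wall. The sketch below is my own simplified 2-D version of that idea, not the project's code; the bin dimensions, standoff distance, safety margin, and yaw range are all assumed values, and `x` is measured in metres from the bin center rather than normalized.

```python
import math
import random


def approach_is_clear(x, yaw, half_width, depth, standoff, margin):
    """True if a straight approach from the pre-grasp pose stays between
    the side walls, both at the bin face and at the back of the bin."""
    limit = half_width - margin
    x_at_face = x + standoff * math.tan(yaw)
    x_at_back = x + (standoff + depth) * math.tan(yaw)
    # The path is a straight line, so checking both ends is sufficient.
    return abs(x_at_face) <= limit and abs(x_at_back) <= limit


def sample_yaw(x, rng, half_width=0.20, depth=0.30, standoff=0.10,
               margin=0.03, max_yaw=math.radians(30), max_tries=1000):
    """Uniformly sample a yaw, rejecting draws that would hit a wall.
    Returns None if no valid yaw is found within max_tries."""
    for _ in range(max_tries):
        yaw = rng.uniform(-max_yaw, max_yaw)
        if approach_is_clear(x, yaw, half_width, depth, standoff, margin):
            return yaw
    return None


rng = random.Random(0)
for x in (0.0, 0.15):
    print(x, round(math.degrees(sample_yaw(x, rng)), 1))
```

With these assumed dimensions, a pre-grasp pose near a wall only accepts yaws that point back toward the bin interior, while a central pose accepts a symmetric range.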

With sigmoid sampling, the orientations converge to the center of the bin (x=0), which is unrealistic because peripheral objects could be picked close to the bin boundaries (x=1).

Later on, the modified uniform sampling method caused another issue: the end-effector collided with the target bin’s walls when the robot moved from the home pose to a pre-grasp pose. The cause was the bin’s thin (2 mm) walls, which collision checking sometimes skipped.

I solved the issue by redefining the target link for which the path is computed in MoveIt, and by increasing the C-space discretization and collision-checking frequency in MoveIt motion planning.
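Why a thin wall can be skipped is easy to see in a toy example. The sketch below is a 1-D illustration of discrete collision checking, not MoveIt's implementation: a path is sampled at a fixed step, and a 2 mm wall goes unnoticed whenever the step is coarse enough for consecutive samples to land on either side of it. The positions and step sizes are made-up values.

```python
import math


def path_hits_wall(start, goal, wall_min, wall_max, step):
    """Check a straight 1-D path for collision by sampling it at
    intervals of at most `step` metres (endpoints included)."""
    n = max(1, math.ceil(abs(goal - start) / step))
    for i in range(n + 1):
        p = start + (goal - start) * i / n
        if wall_min <= p <= wall_max:
            return True
    return False


# A 2 mm wall between 51 mm and 53 mm along a 100 mm path.
wall = (0.051, 0.053)
print(path_hits_wall(0.0, 0.1, *wall, step=0.01))   # coarse check misses the wall
print(path_hits_wall(0.0, 0.1, *wall, step=0.001))  # fine check detects the wall
```

A step smaller than the wall thickness guarantees that at least one sample lands inside the wall, which is the intuition behind raising the collision-checking frequency.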

Overall, I analyzed 800 pod poses (400 for each of the two pods, with a 1 cm step in x and y) and showed that the reachability failure rate could be reduced from 20/1600 to 1/1600.
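Expressed as percentages, the two failure counts quoted above work out as follows (the helper name is illustrative):

```python
def failure_rate(failures, total):
    """Fraction of tests that failed."""
    return failures / total


before = failure_rate(20, 1600)
after = failure_rate(1, 1600)
print(f"before: {before:.2%}, after: {after:.4%}")
# before: 1.25%, after: 0.0625%
print(f"reduction factor: {before / after:.0f}x")
# reduction factor: 20x
```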

Also, the trend shifts towards greater distances, with the pod center close to the plane containing the robot’s origin (y=0.470m). Corner cases include close positions, but those have high variance and are thus unstable under small disturbances in position.

The current position is highlighted; it scores lower than some other possible positions.

In fairness, I should note that the reachability test did not take the picking process (moving from the pre-grasp pose into the bin) into account, because of limited computational resources and the urgency of the problem.

When we applied the test result in the real world, the x-coordinate was shifted slightly (~3-5 cm) towards the robot, which is expected.